Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries

Published March 10, 2026

By Amine Dirhoussi (aminediroHF), Quentin Gallouédec (qgallouedec), Kashif Rasul (kashif), Lewis Tunstall (lewtun), Edward Beeching (edbeeching), Albert Villanova del Moral (albertvillanova), Nouamane Tazi (nouamanetazi), Leandro von Werra (lvwerra), Sergio Paniego (sergiopaniego)

Major AI vendors are pulling the AI plan race into practical use: price, storage, stronger models, and bundle rights that land in everyday work. The Hugging Face Blog is a strong enough source to treat the story as verified, but the useful part still lies in the context and practical impact.

The upgrade worth noting

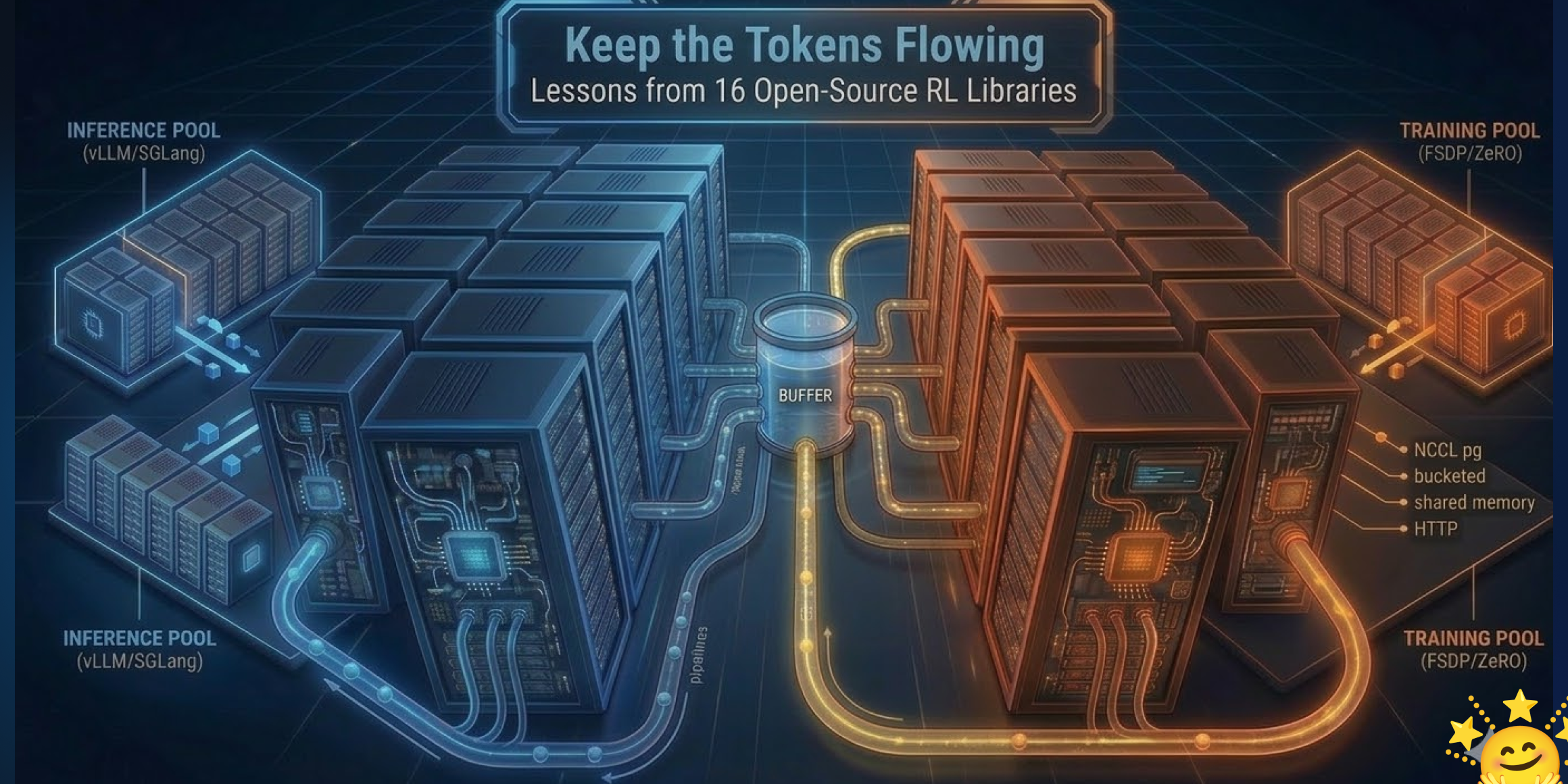

The Hugging Face article is organized as follows:

- 1. Motivation: From synchronous RL training to async architectures
  - 1.1 How TRL Does RL Training Today
  - 1.2 Colocated vs. Disaggregated Training
  - 1.3 The Generation Bottleneck
  - 1.4 The Core Insight
- 2. The Comparison Framework: Seven Axes
  - Axis 1: Orchestration & Concurrency Primitive
  - Axis 2: Rollout Buffer Design
  - Axis 3: Weight Synchronisation Protocol
  - Axis 4: Staleness Management
  - Axis 5: Partial Rollout Handling
  - Axis 6: LoRA Training Support
  - Axis 7: Distributed Training Backend & Parallelism
- 4. Global Overview: Sixteen Libraries at a Glance
- 5. The Next Wave: Design Implications
  - 5.1 Critic-Free Algorithms: Memory Freed, But Weight Sync Pressure Increases
  - 5.2 Process Rewards: A New Synchronisation Barrier
  - 5.3 Multi-Agent Co-Evolution: The Straggler Problem Compounds
  - 5.4 Training-Inference Mismatch: The Deepseek v3.2 MoE Case Study
  - 5.5 Distillation: The Same Async Problem Under a Different Name
- 6. Design Choices for TRL's Async Trainer
  - Design Principle: Keep Orchestration Lightweight
  - 1. Bounded Queue with Per-Token model_version (No Double-Buffering)
  - 2. NCCL Weight Sync with Packed Transfers
  - 3. Partial Rollout Support for Agentic Workloads

The article opens with a TL;DR for readers who don't have time to read 5,000 words about async RL plumbing.

Where to look at price and bundle value

On AI plans, the critical read is not just the extra terabytes on paper, but whether pricing stays stable, which model tier is actually unlocked, how tight the regional limits remain, and how clearly data privacy is promised.

Which AI layers are lifting the plan

What makes this worth opening is that the bundled AI touches real tools like mail, docs, research, image generation, video, or note-taking instead of sitting as a standalone demo.

Who should pay attention

The readers who should watch most closely are the ones already paying for storage, docs, meetings, content creation, and AI at the same time. If one plan truly bundles those layers, the value will surface quickly. Readers using AI only for occasional prompts may still be fine on lighter or free tiers.

Patrick Tech Media take

Patrick Tech Media reads moves like this as a race for practical value. The plan that removes the need for extra side services, reduces switching between tools, and keeps AI quality stable will hold an advantage longer than the launch buzz. The piece relies on one reference, the Hugging Face Blog post, to lock the main details in place.

Context Worth Keeping

The important thing to keep in view is that the AI race is no longer only about model bragging rights; it is about practical value in daily work. The floor is firmer here because the story is anchored by an official source, not only by second-hand reaction.

Source notes

- Hugging Face Blog (official site)
