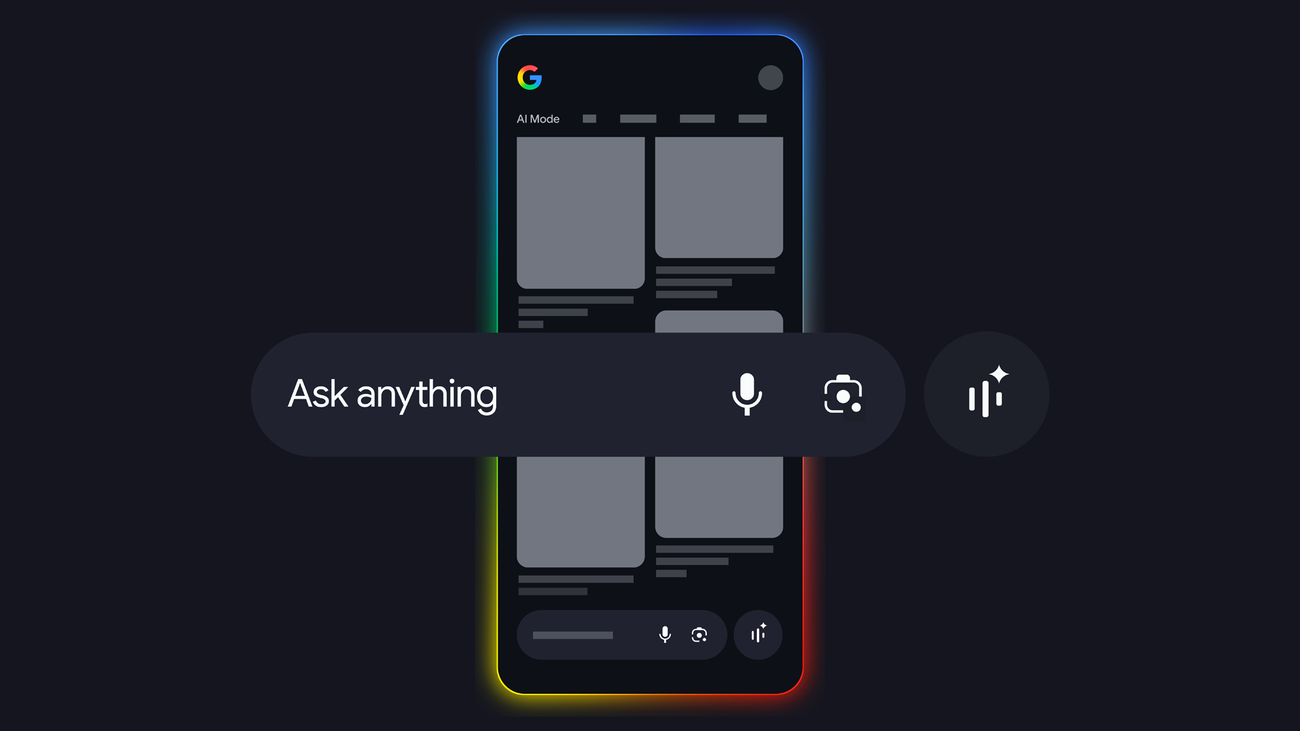

We’ve all been there: you see a photo of a perfectly styled living room or a well-curated street-style outfit, and you want to know where everything came from. Until recently, visual search was a one-item-at-a-time process. But a major update to Circle to Search and Lens now allows Google to break down a single image and search for multiple objects within it simultaneously. The announcement comes from the Google Search Blog, an official source, so the core facts can be treated as verified; the useful part lies in the context and practical impact. On the device side, the question is whether this technical change actually alters feel, lifespan, or upgrade cost in real use.

What is happening now

The Google Search Blog is the main source behind the core facts in this piece. The footing is firmer here because the story is anchored by an official source, not only by second-hand reaction. With devices, practical impact usually shows up in battery life, heat, stability, and long-term usability rather than in a few flashy headline numbers.

Where the sources line up

The Google Search Blog is the single official source behind this piece, and it is strong enough to treat the story as verified. The core change it describes is that visual search, until recently a one-item-at-a-time process, can now handle several objects in one image.

The details worth keeping

A major update to Circle to Search and Lens now allows Google to break down a single image and search for multiple objects within it simultaneously. On the device side, the useful angle is whether this technical change actually alters feel, lifespan, or upgrade cost in real use.

Why this matters most

The core shift is confirmed, so the better question is how far it travels and who feels it first. In practice, if you use Circle to Search on Android to search for an entire outfit, you’ll see results for every component of the look, not just one piece at a time. What remains to be seen is the rollout speed, the real impact, and the switching cost for users or teams.

What to watch next

The next readout is price, device coverage, and whether the change feels real once the hardware reaches users. Patrick Tech Media will keep checking rollout speed, user reaction, and how the Google Search Blog updates the story. This piece rests on a single early source, which is kept as the reference for locking the main details in place.

Context Worth Keeping

With devices, the real difference rarely lives on the spec sheet; it lives in whether daily use becomes better or more annoying.

Source notes

- Google Search Blog (official site, global)
